If you don't care for the rationale or technical details discussed in this blog, the API is currently available free of charge and can be accessed here: https://sonar.omnisint.io

Additionally, a command-line client has been implemented, which can be obtained by running the following command:

go get github.com/cgboal/SonarSearch/crobat-client

crobat-client --init

Ok, but why?

Project Sonar, developed by Rapid7, is an internet-wide survey that aims to gather large amounts of information such as port scan data, SSL certificates, and DNS records. Moreover, Rapid7 very generously makes these datasets available to Security Researchers at no additional cost. Find out more information regarding Project Sonar.

In this blog, we are primarily concerned with the DNS record data collected by Project Sonar. The DNS data is represented in JSON, and the dataset for A records alone is made up of 183GB of JSON data and is updated approximately every week. As you can imagine, this quantity of DNS data makes for an excellent resource when performing DNS reconnaissance activities.

For example, while you may use tools such as OWASP's Amass or Subfinder for DNS enumeration, these tools can take a few minutes to run and often require making many DNS requests (depending on configuration). Furthermore, the data retrieved from Project Sonar may differ from what those tools discover, so having an additional data source is welcome. More on this later.

The problem

Right, now that we're all in agreement that Project Sonar is awesome and we'd like to use it in our DNS enumeration activities, we're good to go... right? Well, not quite. Due to the size of the Project Sonar datasets, searching them for DNS information can take an unrealistically long time (~20 minutes). Moreover, it is often impractical to keep a 183GB JSON file on hand at all times, especially for road warriors working with limited hard disk capacity.
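To see why a raw search is slow, here is a minimal sketch of the naive approach: every line of the dump has to be decoded and compared before a single result comes back. The record layout (`timestamp`, `name`, `type`, `value`) and the names `Record`, `matchesDomain`, and `ScanRecords` are assumptions made for illustration, not something taken from the Project Sonar documentation or from Crobat.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"io"
	"strings"
)

// Record mirrors one line of the dataset. The field names are an assumption
// about the dump layout made for this sketch.
type Record struct {
	Timestamp string `json:"timestamp"`
	Name      string `json:"name"`
	Type      string `json:"type"`
	Value     string `json:"value"`
}

// matchesDomain reports whether name is the domain itself or one of its subdomains.
func matchesDomain(name, domain string) bool {
	return name == domain || strings.HasSuffix(name, "."+domain)
}

// ScanRecords streams newline-delimited JSON and returns every matching record.
// Each line is decoded in full, which is what makes a scan of the whole dump slow.
func ScanRecords(r io.Reader, domain string) []Record {
	var out []Record
	sc := bufio.NewScanner(r)
	for sc.Scan() {
		var rec Record
		if err := json.Unmarshal(sc.Bytes(), &rec); err != nil {
			continue // skip malformed lines
		}
		if matchesDomain(rec.Name, domain) {
			out = append(out, rec)
		}
	}
	return out
}

func main() {
	// Made-up sample data using documentation-only IP addresses.
	sample := `{"timestamp":"0","name":"www.example.com","type":"a","value":"192.0.2.1"}
{"timestamp":"0","name":"notexample.com","type":"a","value":"192.0.2.2"}
{"timestamp":"0","name":"example.com","type":"a","value":"192.0.2.3"}`
	for _, rec := range ScanRecords(strings.NewReader(sample), "example.com") {
		fmt.Println(rec.Name, rec.Value)
	}
}
```

The work grows linearly with the size of the file, regardless of how few records actually match.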

Building a solution

To address these issues, I started by throwing all the Project Sonar data into MongoDB because it's well suited to retrieving records from large datasets, and it's super easy to import JSON data. However, once the data was imported it was still very, very slow to query.

Having not used MongoDB before, I was rather unimpressed and started researching optimizations. Very quickly I encountered the concept of creating Indexes within collections of data. Created Indexes can then be used to narrow down the search space within the collection when trying to retrieve records.

Indexing the data

In MongoDB, there are many different kinds of Indexes, including text indexes which allow data to be retrieved around ten times faster than would be possible with a regular expression search. Unfortunately, text indexes were still not fast enough to make an API based solution practical. The reason for poor performance was that it is much more difficult to find a substring when the substring you are searching for is not located at the start of the string being searched.

To this end, it made the most sense to use a segment of a domain name as the Index. In this instance, the domain name without a top-level-domain, or subdomains was chosen.

For example, if a domain in the database has the name:

www.calumboal.com

The Index value, which shall be referred to as the domain_index from here on out, would be:

calumboal

This particular substring of the fully qualified domain name was chosen as it allows searching for all TLDs of a given domain, as well as all subdomains.

To optimize queries that request subdomains for a specific domain and TLD (e.g. all subdomains of onsecurity.co), a composite index was also created, consisting of the domain and TLD components.
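The effect of the two indexes can be illustrated with an in-memory analogy. This is only a sketch: the real indexes live inside MongoDB, and the names `Entry`, `Index`, `All`, and `Subdomains` are hypothetical. One map is keyed by the domain_index alone, and a second is keyed by the (domain_index, TLD) pair, standing in for the composite index.

```go
package main

import "fmt"

// Entry is a record that has already been enriched with its parsed components.
type Entry struct {
	FQDN        string
	DomainIndex string // e.g. "onsecurity"
	TLD         string // e.g. "co" or "co.uk"
}

// domainTLD plays the role of the composite (domain, TLD) index key.
type domainTLD struct {
	DomainIndex string
	TLD         string
}

// Index holds both lookups: by domain_index alone, and by domain_index plus TLD.
type Index struct {
	byDomain    map[string][]string
	byDomainTLD map[domainTLD][]string
}

// BuildIndex groups FQDNs under both keys in a single pass.
func BuildIndex(entries []Entry) *Index {
	ix := &Index{
		byDomain:    make(map[string][]string),
		byDomainTLD: make(map[domainTLD][]string),
	}
	for _, e := range entries {
		ix.byDomain[e.DomainIndex] = append(ix.byDomain[e.DomainIndex], e.FQDN)
		key := domainTLD{e.DomainIndex, e.TLD}
		ix.byDomainTLD[key] = append(ix.byDomainTLD[key], e.FQDN)
	}
	return ix
}

// All returns every FQDN for a domain_index, across all TLDs.
func (ix *Index) All(domainIndex string) []string {
	return ix.byDomain[domainIndex]
}

// Subdomains returns the FQDNs for one domain_index under one specific TLD.
func (ix *Index) Subdomains(domainIndex, tld string) []string {
	return ix.byDomainTLD[domainTLD{domainIndex, tld}]
}

func main() {
	ix := BuildIndex([]Entry{
		{"www.onsecurity.co", "onsecurity", "co"},
		{"mail.onsecurity.co", "onsecurity", "co"},
		{"www.onsecurity.co.uk", "onsecurity", "co.uk"},
	})
	fmt.Println(ix.All("onsecurity"))
	fmt.Println(ix.Subdomains("onsecurity", "co"))
}
```

Both lookups are exact-match key lookups, which is the property that makes them fast compared with searching for a substring in the middle of every name.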

To use the above domain_index format as an Index in MongoDB, the domain_index has to be extracted from each FQDN in the 183GB Project Sonar Database.

Stripping TLDs

To make matters worse, removing TLDs from domain names is not as simple as it may seem. These days there are over 4000 TLDs, and the number of components in them varies. For example, calumboal.com has only one TLD component, whereas onsecurity.co.uk has two. Thus, there is no way to know how many components of an FQDN belong to the TLD without checking which TLD is in use.

My initial solution used regular expressions to do a find-and-replace operation on an FQDN for each TLD (at least until a match was found). However, this proved to be extremely slow: processing 100k FQDNs took an average of 5 seconds, and that was after a large amount of concurrency had been introduced into the program executing the regular expressions.

In the end, I changed tactics and implemented a suffix array search (similar to a trie), which is capable of finding substrings extremely quickly and efficiently. Such searches can be easily implemented in Go using the index/suffixarray package in the standard library. As TLDs come at the end of a string, I split off the last component of an FQDN and checked whether it was a TLD. If it wasn't, I checked the last two components of the FQDN, and so on, until a match was found. The number of components that had been checked when the match was found was then used to calculate the offset of the domain component within the FQDN.
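The right-to-left walk described above can be sketched as follows. This is not the actual parser: it swaps the suffix array for a plain map lookup, uses a four-entry sample list in place of a real TLD list, and keeps the longest matching suffix rather than stopping at the first match, so that `co.uk` is not cut short at `uk`. The names `knownTLDs`, `Parts`, and `Parse` are hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// knownTLDs is a tiny illustrative sample; a real list has thousands of entries.
var knownTLDs = map[string]bool{"com": true, "co": true, "uk": true, "co.uk": true}

// Parts holds the pieces of a parsed FQDN.
type Parts struct {
	Subdomain   string // e.g. "www" (may be empty)
	DomainIndex string // e.g. "calumboal"
	TLD         string // e.g. "com" or "co.uk"
}

// Parse grows a candidate suffix one label at a time from the right and
// remembers the longest candidate that is a known TLD. The label just before
// that suffix is the domain_index. It returns false if no TLD matches or if
// nothing is left over to act as the domain.
func Parse(fqdn string) (Parts, bool) {
	name := strings.ToLower(strings.TrimSuffix(fqdn, "."))
	labels := strings.Split(name, ".")
	tldLabels := 0
	// n stops short of len(labels) so at least one label remains for the domain.
	for n := 1; n < len(labels); n++ {
		candidate := strings.Join(labels[len(labels)-n:], ".")
		if knownTLDs[candidate] {
			tldLabels = n
		}
	}
	if tldLabels == 0 {
		return Parts{}, false
	}
	domainPos := len(labels) - tldLabels - 1
	return Parts{
		Subdomain:   strings.Join(labels[:domainPos], "."),
		DomainIndex: labels[domainPos],
		TLD:         strings.Join(labels[domainPos+1:], "."),
	}, true
}

func main() {
	for _, fqdn := range []string{"www.calumboal.com", "onsecurity.co.uk", "onsecurity.co"} {
		p, ok := Parse(fqdn)
		fmt.Printf("%s -> %+v (ok=%v)\n", fqdn, p, ok)
	}
}
```

Because the loop only ever inspects a handful of labels per name, the cost per FQDN stays small no matter how many TLDs are in the list, which is the key difference from trying one regular expression per TLD.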

Using this approach, the time taken to process 100k domains was reduced from 5 seconds to 0.04 seconds. I have open-sourced the domain parser I wrote for this; it is capable of extracting subdomains, FQDNs, and domain_indexes incredibly quickly. Check out the source code for the domain parser.

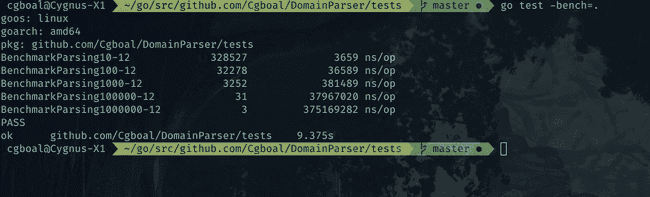

Below are the benchmarks calculated for the parser:

The above table shows that it took 0.036 seconds to parse 100k domains, and 0.36 seconds to parse 1 million domains.

With efficient parsing achieved, it was possible to generate the domain_index value for every domain in Project Sonar's A record dataset in roughly 1-2 hours, whereas it was estimated to take multiple weeks when using regular expressions.

A MongoDB importer for the Project Sonar A record dataset was then created, which enriched each record with the domain_index value on the fly and inserted the records into MongoDB. The speed of this importer was found to be similar to that of MongoDB's native mongoimport utility, although perhaps a bit slower. Nevertheless, it is much faster to import the data directly into MongoDB after enriching it with the domain_index than to write the enriched JSON to a file and then import it.
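The on-the-fly enrichment step can be sketched like this. It is not the actual importer: the `name` field, the `EnrichLine` function, and the `indexOf` callback are assumptions for illustration, and the enriched line is printed where the real importer would insert it into MongoDB.

```go
package main

import (
	"bufio"
	"encoding/json"
	"errors"
	"fmt"
	"strings"
)

// EnrichLine decodes one JSON record, adds a "domain_index" field computed by
// indexOf from the record's "name" field, and re-encodes it. The "name" field
// is an assumed part of the record layout.
func EnrichLine(line []byte, indexOf func(fqdn string) string) ([]byte, error) {
	var rec map[string]interface{}
	if err := json.Unmarshal(line, &rec); err != nil {
		return nil, err
	}
	name, ok := rec["name"].(string)
	if !ok {
		return nil, errors.New("record has no string \"name\" field")
	}
	rec["domain_index"] = indexOf(name)
	return json.Marshal(rec)
}

func main() {
	// Stand-in parser: assumes a single-label TLD, which is NOT true in general.
	// A real importer would plug in a proper domain parser here.
	naive := func(fqdn string) string {
		labels := strings.Split(fqdn, ".")
		if len(labels) < 2 {
			return ""
		}
		return labels[len(labels)-2]
	}

	input := `{"name":"www.calumboal.com","type":"a","value":"192.0.2.1"}`
	sc := bufio.NewScanner(strings.NewReader(input))
	for sc.Scan() {
		out, err := EnrichLine(sc.Bytes(), naive)
		if err != nil {
			continue // skip records that cannot be enriched
		}
		// The real importer would insert the record into MongoDB at this point.
		fmt.Println(string(out))
	}
}
```

Processing one record at a time means the enriched data never has to be written to an intermediate file.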

Check out the importer created for this project, along with the API described in the following section.

Writing an API

To make the newly indexed data accessible, a REST API was written in Go. The API allows the retrieval of subdomains for a specific FQDN, TLDs for a domain, and also all subdomains for any TLD of a given domain.

The implemented endpoints are as follows:

/subdomains/{domain.tld}

/tlds/{domain}

/all/{domain}
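As a rough illustration of how these three routes could be served, here is a minimal handler sketch using only the standard library. It is not the Crobat implementation: the `Lookup` hook, the `splitPath` helper, and the JSON-array response format are all assumptions, and the backend is a stub where the real service would query MongoDB.

```go
package main

import (
	"encoding/json"
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// splitPath maps "/subdomains/example.com" to ("subdomains", "example.com").
// Only the three endpoints listed above are accepted.
func splitPath(path string) (endpoint, arg string, ok bool) {
	parts := strings.Split(strings.Trim(path, "/"), "/")
	if len(parts) != 2 || parts[1] == "" {
		return "", "", false
	}
	switch parts[0] {
	case "subdomains", "tlds", "all":
		return parts[0], parts[1], true
	}
	return "", "", false
}

// Lookup is a hypothetical backend hook that returns results for an endpoint.
type Lookup func(endpoint, arg string) []string

// Handler serves the three endpoints and returns results as a JSON array
// (the response format is an assumption of this sketch).
func Handler(lookup Lookup) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		endpoint, arg, ok := splitPath(r.URL.Path)
		if !ok {
			http.NotFound(w, r)
			return
		}
		w.Header().Set("Content-Type", "application/json")
		if err := json.NewEncoder(w).Encode(lookup(endpoint, arg)); err != nil {
			http.Error(w, "encoding failed", http.StatusInternalServerError)
		}
	})
}

func main() {
	// Stub backend that simply echoes the request it received.
	h := Handler(func(endpoint, arg string) []string {
		return []string{endpoint + ":" + arg}
	})
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, httptest.NewRequest("GET", "/tlds/calumboal", nil))
	fmt.Printf("%d %s", rec.Code, rec.Body.String())
}
```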

Performance

So, was all that effort actually worth it? Let's take a look at some benchmarks to compare the speed, accuracy, and completeness of the results obtained from DNS enumeration tools.

For the sake of transparency, the running of each tool was timed, and then the results were resolved using ZDNS. The output of ZDNS was then filtered using jq, and the resolvable domain names were sorted and de-duplicated before being counted. For example:

cat $method | zdns A -name-servers 1.1.1.1 | jq -r 'select (.data.answers[].answer != "") | .name' | sort -u | wc -l
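For readers who prefer to do the filtering and counting step in Go, the following sketch is roughly equivalent to the `jq | sort -u | wc -l` part of the pipeline. It reads ZDNS output from standard input and relies only on the fields that the jq filter above references (`.name` and `.data.answers[].answer`); the type and function names are hypothetical, and lines that do not decode into that shape are simply skipped.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"os"
)

// zdnsLine captures only the fields used by the jq filter.
type zdnsLine struct {
	Name string `json:"name"`
	Data struct {
		Answers []struct {
			Answer string `json:"answer"`
		} `json:"answers"`
	} `json:"data"`
}

// resolvable returns the queried name if the line has at least one non-empty answer.
func resolvable(line []byte) (string, bool) {
	var res zdnsLine
	if err := json.Unmarshal(line, &res); err != nil {
		return "", false
	}
	for _, a := range res.Data.Answers {
		if a.Answer != "" {
			return res.Name, true
		}
	}
	return "", false
}

func main() {
	seen := make(map[string]struct{}) // the set gives us the de-duplication
	sc := bufio.NewScanner(os.Stdin)
	sc.Buffer(make([]byte, 0, 64*1024), 1024*1024) // allow long result lines
	for sc.Scan() {
		if name, ok := resolvable(sc.Bytes()); ok {
			seen[name] = struct{}{}
		}
	}
	fmt.Println(len(seen))
}
```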

spotify.com

| Method | #Domains | #Resolvable | Time |
|---|---|---|---|
| Crobat | 150 | 150 | 1.1s |
| DNSDumpster | 70 | 69 | 9.7s |
| Subfinder | 665 | 297 | 46s |
| Amass | 504 | 503 | 15m |
| Amass (passive) | 1107 | 164 | 1.5m |

google.com

| Method | #Domains | #Resolvable | Time |
|---|---|---|---|
| Crobat | 25537 | 23508 | 30s |
| DNSDumpster | 100 | 100 | 9s |
| Subfinder | 28922 | 23670 | 44m |
| Amass | 10965 | 10852 | 24h+ |
| Amass (passive) | 31684 | 23533 | 3m |

As can be seen above, while the Crobat API is significantly faster than other methods of subdomain discovery, the Sonar dataset does lack coverage in comparison to other tools. However, these approaches are not necessarily comparable, as Amass and Subfinder perform a much wider range of enumeration activities than simply querying Sonar, and thus have greater datasets at their disposal.

Moreover, Amass also uses the Sonar dataset as one of its sources. However, it fails to return results from Sonar when a domain with a very large number of subdomains is requested (e.g. zendesk.com). Clearly, in the second test case, Amass run without the -passive flag had some issues.

Now, we shall look at the results from the above tests and identify whether the Sonar dataset returned any results which were not identified by the other methods:

spotify.com

| Method | #Domains unique to Crobat |
|---|---|
| Subfinder | 0 |
| Amass | 9 |
| Amass (passive) | 0 |

google.com

| Method | #Domains unique to Crobat |
|---|---|
| Subfinder | 47 |
| Amass | 14589 |
| Amass (passive) | 58 |
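The "unique to Crobat" figures are set differences: names that Crobat returned and the other method did not. The exact commands used for this comparison are not shown, so the following is only a sketch of the calculation, with a hypothetical `UniqueTo` helper and made-up sample names.

```go
package main

import (
	"fmt"
	"sort"
)

// UniqueTo returns the names present in a but absent from b,
// de-duplicated and sorted.
func UniqueTo(a, b []string) []string {
	inB := make(map[string]struct{}, len(b))
	for _, name := range b {
		inB[name] = struct{}{}
	}
	seen := make(map[string]struct{})
	var out []string
	for _, name := range a {
		if _, found := inB[name]; found {
			continue // the other tool also found this name
		}
		if _, dup := seen[name]; dup {
			continue // already counted
		}
		seen[name] = struct{}{}
		out = append(out, name)
	}
	sort.Strings(out)
	return out
}

func main() {
	crobat := []string{"a.example.com", "b.example.com", "c.example.com"}
	other := []string{"c.example.com", "d.example.com"}
	fmt.Println(UniqueTo(crobat, other))
}
```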

So overall, it appears that the benefit of using the Crobat API in conjunction with other tools when performing subdomain discovery varies depending on the target domain, provided that absolute coverage is your primary goal.

However, that is not to say there is no value in being able to quickly query such a large data source, especially if you would like a general overview of an organization's assets, as opposed to a comprehensive list.

Performance under load

While I do not have proper benchmarks for this, testing found that many clients could perform a large number of queries per second for different domains whilst still obtaining accurate results.

A load test querying a single domain showed that the API was able to successfully handle an average of 700 requests per second. However, the database caches the results of a lookup after the first query, so this figure is not especially meaningful.

Caveats

Due to the current implementation of paging, retrieving all pages of extremely large queries can take an increasingly long time. One example is zendesk.com, which has 100 million+ subdomains. I do aim to optimize this further in the future. However, if anyone has any ideas on how to increase performance in this area, give me a shout.
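One idea that is often suggested for this kind of slowdown is keyset (cursor) pagination: instead of asking for "page N", the client passes the last name it received and the server seeks directly to the entries after it. The sketch below shows the idea over a sorted in-memory slice. It assumes the slowdown comes from offset-style skipping, which may not match how paging actually works in the API, and `PageAfter` is a hypothetical helper.

```go
package main

import (
	"fmt"
	"sort"
)

// PageAfter returns up to limit names that sort strictly after last.
// The input must already be sorted. Passing last == "" starts from the
// beginning. The seek is a binary search, so its cost does not grow with
// how deep into the result set the client already is.
func PageAfter(sorted []string, last string, limit int) []string {
	start := sort.Search(len(sorted), func(i int) bool { return sorted[i] > last })
	end := start + limit
	if end > len(sorted) {
		end = len(sorted)
	}
	if end < start {
		end = start // guards against a negative limit
	}
	return sorted[start:end]
}

func main() {
	names := []string{"a.example.com", "b.example.com", "c.example.com", "d.example.com", "e.example.com"}
	cursor := ""
	for {
		page := PageAfter(names, cursor, 2)
		if len(page) == 0 {
			break
		}
		fmt.Println(page)
		cursor = page[len(page)-1] // the last name seen becomes the next cursor
	}
}
```

In a database, the same idea relies on an index over the sort key so that the seek can jump straight to the cursor position.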

Conclusions

This project was a lot of fun to work on, especially optimizing the initial parsing of the dataset into an indexable format. I believe that the project has also achieved its goal of making Project Sonar searchable in a time-efficient manner.

However, the results of the comparisons to other subdomain discovery tools do show that it is best to use a variety of data sources when aiming for full coverage of an organization's digital footprint.

Nevertheless, I believe there is certainly value in being able to perform extremely quick queries of the Project Sonar dataset, especially when it comes to on-the-fly operations where active DNS enumeration would be a significant bottleneck. Additionally, the ability to retrieve all entries for a given domain across all TLDs is also extremely valuable, and not possible out of the box using other DNS reconnaissance tools (as far as I'm aware).

Future Work

In the future, I intend to add an endpoint that allows you to retrieve all DNS entries with a given subdomain. For example, you could search for all domains which begin with Citrix., as I believe this would be valuable to researchers. However, this will likely have to wait until the pagination problem is resolved.

Additionally, I may add an option to return a count of all records which match a query, as counting the records is significantly faster than retrieving them, and this may prove useful for trend analysis or determining whether new entries are available for organizations which are being tracked.

Finally, I am considering performing active enumeration using other subdomain enumeration tools to enrich the data provided by the Crobat API from Rapid7's Project Sonar dataset. Primarily, this would be performed for bug bounty targets. However, I wouldn't hold your breath for this. If someone wants to handle the automation of active DNS enumeration, I am happy to write some endpoints which accept submissions to the database.